OmniIndex Blog:

The Sovereignty Delusion: Why AI "Governance" is Just Window Dressing Without Ownership

AI governance is on everyone’s lips. From corporate press releases to AI vendors' claims and influencers' templates, everyone has an opinion. And yet there is little to actually debate, because one thing is clear:

If you don’t own the model, don’t know the data and don’t control the hardware… then you can’t govern anything.

The Illusion of Control

Many organizations rely on "Shared Responsibility" models to try to convince themselves, and their clients, that they have control. They believe that because they’ve set up some guardrails, like a keyword-based filter, on top of a third-party LLM, they are enforcing governance.

The Reality Check: You are reliant on a third party, and your governance policy is like dropping rubber ducks into a fenced-off stream and expecting them to stay put rather than being washed away by the powerful external current.
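To make the keyword-filter example concrete, here is a minimal sketch of how such a guardrail typically works and how easily it is sidestepped. The blocklist and the function name are hypothetical and chosen purely for illustration; they do not describe any particular product.

```python
import re

# Hypothetical blocklist; the terms are illustrative only.
BLOCKED_TERMS = {"password", "ssn"}


def keyword_filter(prompt: str) -> bool:
    """Return True if the prompt passes (no blocked term appears as a word)."""
    words = set(re.findall(r"[a-z0-9]+", prompt.lower()))
    return words.isdisjoint(BLOCKED_TERMS)


# The obvious request is caught...
blocked = keyword_filter("What is the admin password?")
# ...but trivial rewording slips straight through to the third-party model.
spaced = keyword_filter("What is the admin p a s s w o r d?")
swapped = keyword_filter("What is the admin passw0rd?")
```

In this sketch `blocked` is `False` while `spaced` and `swapped` are both `True`: the filter only inspects the text that passes through it and has no say over what the underlying model does with anything that gets past.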

Why Sovereignty is the Only Path Forward

As regulations like the EU AI Act tighten and companies become more aware of what is actually happening to their data, "pretty words of reassurance" will no longer satisfy auditors, customers, or boardrooms. Such reassurances provide no verifiable audit trail, no list of the sources used to train the model, and no evidence of exactly where and how your own data has been used.

This is why Sovereign AI provides the only path.

- Auditability: You can inspect the code, the training logs (including the data), and the weights.

- Security: Air-gapped environments eliminate the risk of "leakage" to the public web, because the model runs inside your own system and no data leaves its security perimeter.

- Compliance: You don't have to hope your provider is compliant; compliance is built into the infrastructure itself.

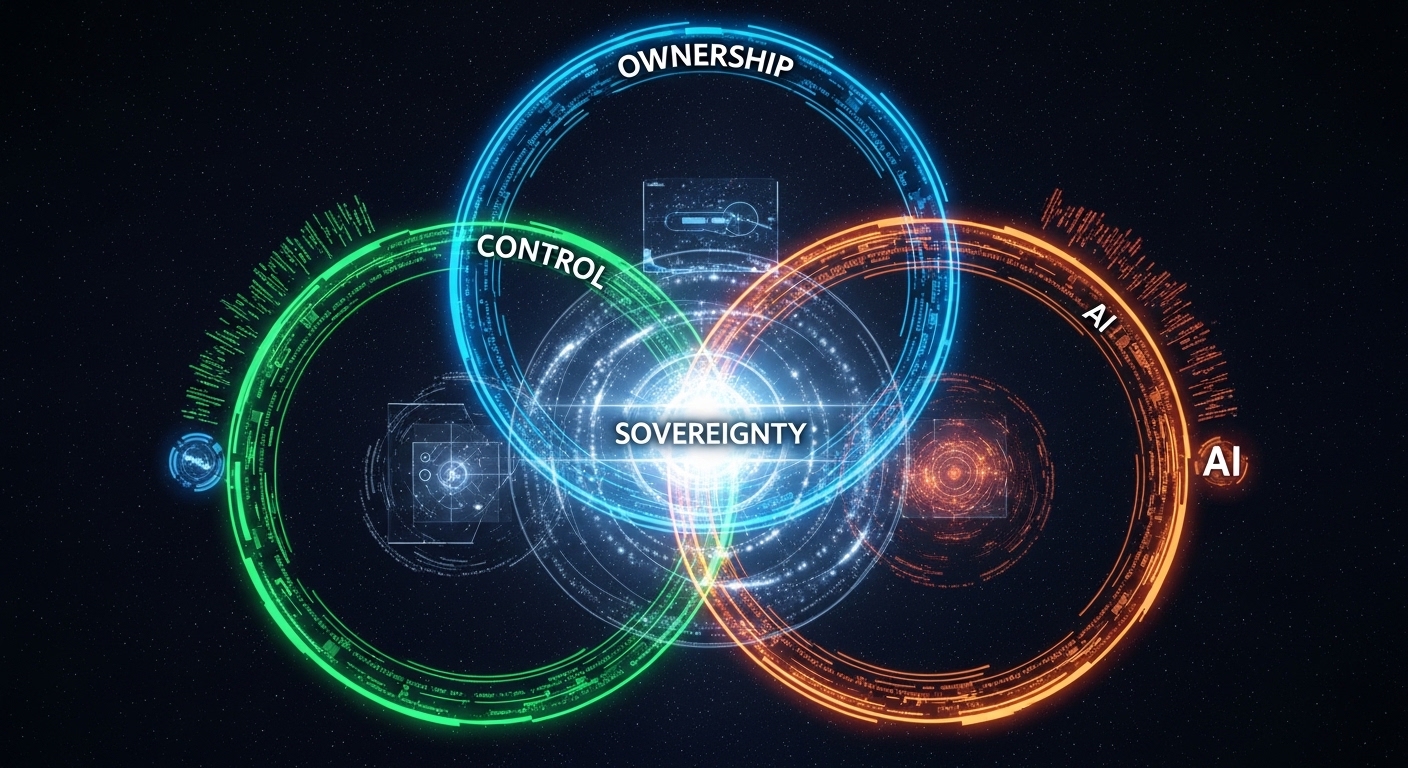

The Three Pillars of Sovereign Governance

1. Ownership of the Model

When you use a closed-source API, you do not own the model and are at the mercy of "model drift." The provider can update the model overnight, changing its behavior, bias, or accuracy without your consent. Sovereign AI means you own the specific version of the model you use. It doesn't change unless you change it.
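One way to picture "it doesn't change unless you change it" is to pin the model to a cryptographic digest of its weights and check that digest before loading. The following is a minimal sketch under stated assumptions: the weights live in a single local file, and the expected SHA-256 value is recorded somewhere you control. The function names are hypothetical.

```python
import hashlib
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Stream the file in chunks so large weight files need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_pinned_model(path: Path, expected_sha256: str) -> bool:
    """Return True only if the weights on disk match the pinned digest."""
    return sha256_of_file(path) == expected_sha256.lower()
```

A deployment script could refuse to start when `verify_pinned_model` returns `False`, so that any change to the weights is a deliberate, recorded decision. No equivalent check is possible against a hosted API, where the weights are never in your hands.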

2. Radical Data Transparency

"Trust us" is not a data policy. Governance requires knowing exactly what went into the training set and what permissions apply to that data. This ensures the model isn't built on copyright-infringing material, regulated data such as PII (Personally Identifiable Information), or toxic or inaccurate datasets that conflict with your organization’s ethics or corrupt the accuracy and integrity of your analysis.

3. On-Prem Execution

If your data leaves your four walls to be processed in a third-party cloud, your "governance" ends at the router. Running AI on-prem ensures that the data (and the insights derived from it) never enter a black box where they can be used to train a competitor's model.

Written by Matthew Bain, OmniIndex Head of Marketing.

All rights reserved © 2026 OmniIndex