OmniIndex Blog:

Beyond the Chatbot: Turning Every Business Interaction into Compounding Asset Memory

The AI industry is currently obsessed with ‘performance’ in the theatrical sense over ‘performance’ in the practical sense. Too much time, energy and money is being poured into making machines sound more human, and not enough into optimizing them for the needs of business.

For a business, the value of an AI isn't found in how well it simulates human conversation. The value lies in its ability to do what humans fundamentally cannot: remember everything, link disparate ideas over time, and protect that knowledge within a sovereign environment.

The Problem with "Conversational" Cloud AI

Most popular cloud-based LLMs are designed for the "disposable" interaction. You open a tab, ask a question, receive an answer, and close the tab. While these models might offer brief memory within a single session, and ingest whatever they choose into their training data, this is often a simulation based on summaries rather than a deep, architectural integration of past knowledge.

In a business context, this creates a "silent tax" on intelligence. When people leave a company, their context and insights go with them. When a project spans months, the nuances of early-stage decisions are often lost in a sea of forgotten browser tabs and unrecorded Slack messages. Cloud AI, as it exists today, mirrors this human weakness rather than solving it.

The Sovereign Alternative: Memory as Architecture

Boudica Torc shifts the focus from performance to persistence. Instead of being an afterthought, memory is built into the core architecture of the system. This isn't just about "remembering the last prompt"; it is about creating a structured interaction system that turns every discussion into lasting organizational knowledge.

Boudica’s advanced conversational features illustrate exactly how business AI must differ from consumer-grade chats:

- Semantic Timelines: While a standard AI sees a prompt in isolation, Boudica Torc can show the evolution of an idea or a project across weeks and months.

- Scenario Branching: Businesses need to explore "what-if" variations without corrupting the primary record of truth. Branching allows for experimental thinking while maintaining the integrity of the original conversation.

- Collaborative Memory: In the leading cloud LLMs, your history is yours alone. In a sovereign business environment like Boudica Torc, your company workflow contributes to an institutional knowledge base that accumulates across the entire organization for enhanced understanding.

- Linked Threads: True intelligence involves connecting decisions to the discussions that birthed them. This allows the system to surface a specific compliance risk discussed months ago the moment it becomes relevant to a new query.
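To make branching and linking concrete, here is a minimal Python sketch of the idea. It is an illustration under our own assumptions, not Boudica Torc's implementation: the `Conversation` class and its `add`, `branch` and `link` methods are hypothetical names invented for this example.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass(eq=False)  # compare by identity; linked records reference each other
class Conversation:
    """Hypothetical conversation record, not an actual Boudica Torc API."""
    title: str
    messages: List[str] = field(default_factory=list)
    parent: Optional["Conversation"] = None  # set when this record is a branch
    linked: List["Conversation"] = field(default_factory=list)

    def add(self, text: str) -> None:
        self.messages.append(text)

    def branch(self, title: str) -> "Conversation":
        # Copy the history so far; later changes to the branch
        # never touch the original record.
        return Conversation(title=title, messages=list(self.messages), parent=self)

    def link(self, other: "Conversation") -> None:
        # Two-way link between a decision and the discussion behind it.
        self.linked.append(other)
        other.linked.append(self)


main = Conversation("Q3 pricing")
main.add("Proposal: raise the enterprise tier by 5%.")

# Explore a what-if without altering the primary record.
what_if = main.branch("What if we raise by 10%?")
what_if.add("Model churn at a 10% increase.")

# Connect the pricing decision to an earlier compliance discussion.
compliance = Conversation("Compliance review")
compliance.add("Price changes need 30 days' customer notice.")
main.link(compliance)

print(len(main.messages), len(what_if.messages))
print([c.title for c in main.linked])
```

The branch starts from a copy of the history, so the experimental thread can grow while the original stays intact, and the link lets either conversation lead back to the other.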

Security and Factuality: The Foundation of Trust

A business cannot trust a "witty" AI that hallucinates. Cloud-based models often prioritize fluency over factuality, giving confident best-guess answers to appear competent rather than admitting that they do not know. Boudica Torc implements rigorous grounding mechanisms so that it never forces an answer that is likely to be untrue.

Retrieval-Augmented Generation (RAG) is the bridge between model intelligence and factual data. By retrieving relevant context from a secure, internal knowledge base before generating an answer, the system reduces hallucinations and ties each claim to documented evidence. Furthermore, an accompanying Factuality Report provides a grounding score showing what fraction of a response is supported, and by which documented evidence.
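As a rough illustration of retrieval followed by a grounding score, consider the toy Python sketch below. It is not how Boudica Torc computes its Factuality Report, which this post does not specify. Simple word overlap stands in for real semantic search, and the function names, document ids and the 0.6 threshold are all assumptions made for the example.

```python
import re
from typing import Dict, List, Tuple


def tokens(text: str) -> set:
    """Lowercased word set; a crude stand-in for real embeddings."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, docs: Dict[str, str], k: int = 2) -> List[str]:
    """Return the ids of the k documents sharing the most words with the query."""
    q = tokens(query)
    ranked = sorted(docs, key=lambda d: len(q & tokens(docs[d])), reverse=True)
    return ranked[:k]


def grounding_report(answer: str, docs: Dict[str, str], ids: List[str],
                     threshold: float = 0.6) -> Tuple[float, Dict[str, str]]:
    """Score = fraction of answer sentences supported by a retrieved document."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", answer) if s.strip()]
    if not sentences:
        return 0.0, {}
    support: Dict[str, str] = {}
    for sentence in sentences:
        words = tokens(sentence)
        if not words:
            continue
        for doc_id in ids:
            overlap = len(words & tokens(docs[doc_id])) / len(words)
            if overlap >= threshold:
                support[sentence] = doc_id  # record which evidence backs it
                break
    return len(support) / len(sentences), support


docs = {
    "policy-7": "Customer data is retained for 90 days after contract end.",
    "memo-12": "The retention policy was approved by the legal team in March.",
}
answer = "Customer data is retained for 90 days. Backups are stored on the moon."

ids = retrieve("How long is customer data retained?", docs)
score, support = grounding_report(answer, docs, ids)
print(score)    # 0.5
print(support)  # {'Customer data is retained for 90 days.': 'policy-7'}
```

Only the first sentence of the answer is backed by a retrieved document, so half of the response counts as grounded and the unsupported claim stands out immediately.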

Privacy as a Controlled Feature

In the cloud, your data is often the product. In a sovereign environment like Boudica Torc, privacy is a granular control. Features like ‘Selective Privacy’ allow users to mark specific interactions as private, ensuring they are never used as context for future generations or seen by the wider organization.

Additionally, a robust data pipeline must include PII Sanitization. Before information is even ingested into the company’s knowledge base, the system can automatically redact emails, phone numbers, and sensitive financial data to maintain compliance and security.
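A simplified Python sketch of what such a redaction step might look like follows. The patterns and the `sanitize` function are illustrative assumptions, not Boudica Torc's pipeline, and real-world redaction needs far broader, locale-aware rules than three regular expressions.

```python
import re

# Illustrative patterns only. Card numbers are replaced before phone
# numbers so that a long digit run is not mistaken for a phone number.
PATTERNS = [
    ("[EMAIL]", re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")),
    ("[CARD]", re.compile(r"\b(?:\d[ -]?){13,16}\b")),
    ("[PHONE]", re.compile(r"\+?\d[\d\s().-]{7,}\d")),
]


def sanitize(text: str) -> str:
    """Replace each matched span with a placeholder before ingestion."""
    for label, pattern in PATTERNS:
        text = pattern.sub(label, text)
    return text


raw = "Contact jane.doe@example.com or +44 20 7946 0958 about card 4111 1111 1111 1111."
clean = sanitize(raw)
print(clean)  # Contact [EMAIL] or [PHONE] about card [CARD].
```

Running redaction before ingestion means the sensitive values never reach the knowledge base at all, rather than being filtered out at query time.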

Technical Efficiency for the Modern Enterprise

Moving away from massive, generalized cloud models toward smaller, specialized, sovereign models also offers significant performance benefits. Boudica, for instance, is optimized for modern accelerators like the NVIDIA A100, achieving sub-second inference latency while being hosted entirely on your own infrastructure, without the enterprise costs of external data centres.

Through LoRA (Low-Rank Adaptation), businesses can fine-tune these models on their specific domains with minimal computational overhead, keeping them tailored, adaptable and precise.
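A quick piece of arithmetic shows where that saving comes from. LoRA leaves an original weight matrix of shape d x k frozen and trains only two small factors, B (d x r) and A (r x k), whose product is added to it. The layer size and rank in this Python sketch are assumed values chosen for illustration, not Boudica's actual configuration.

```python
def lora_trainable_params(d: int, k: int, r: int) -> int:
    """Parameters in the two low-rank factors B (d x r) and A (r x k)."""
    return r * (d + k)


# Assumed layer shape and rank, for illustration only.
d, k, r = 4096, 4096, 8

full = d * k                            # weights updated by full fine-tuning
lora = lora_trainable_params(d, k, r)   # weights updated by LoRA

print(full)                   # 16777216
print(lora)                   # 65536
print(f"{lora / full:.2%}")   # 0.39%
```

Under these assumptions, the adapter trains well under one percent of the weights that full fine-tuning of the same layer would touch.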

Conclusion: Asking the Right Question

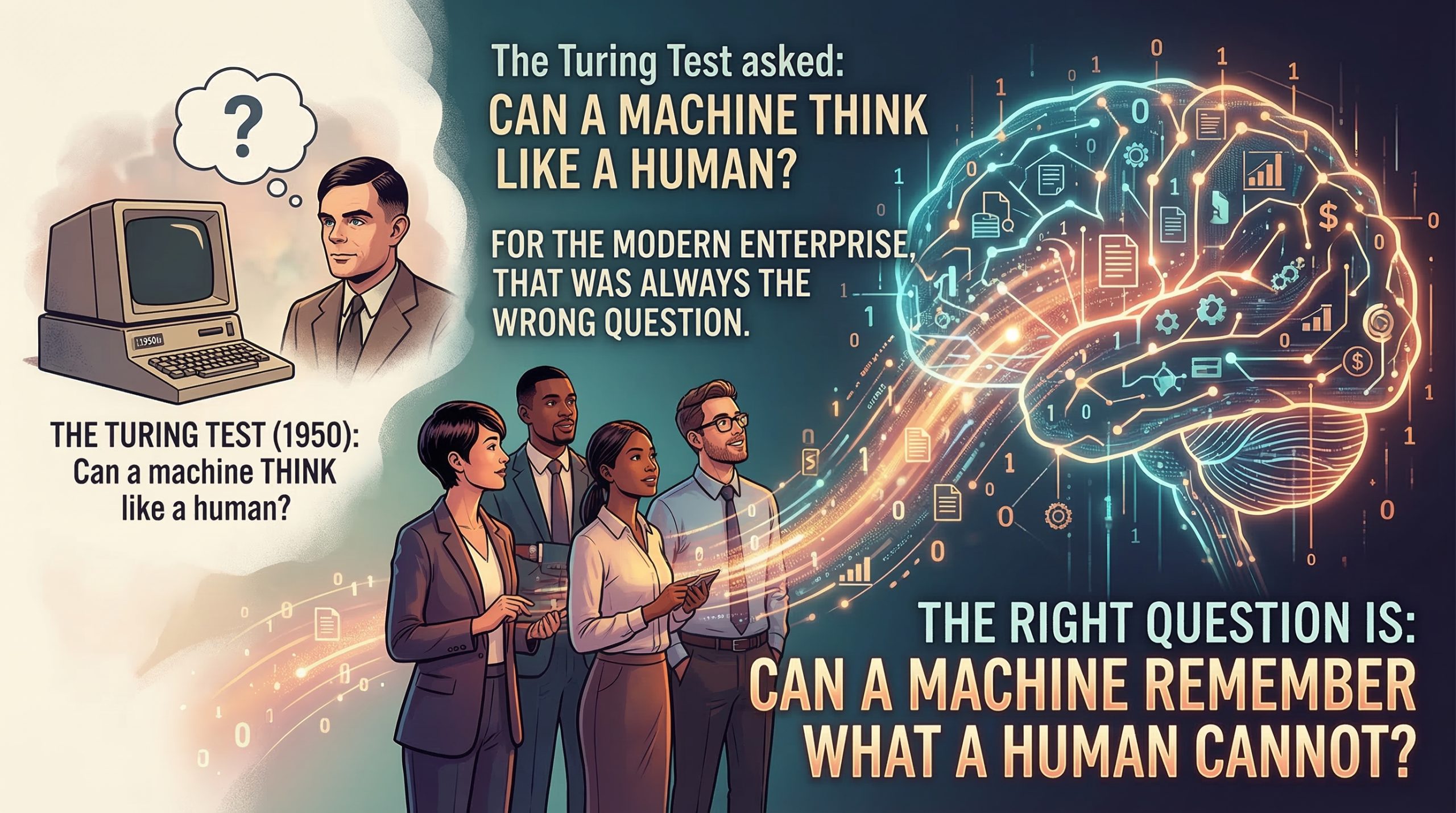

The Turing Test asked: Can a machine think like a human? For the modern enterprise, that was always the wrong question. The right question is: Can a machine remember what a human cannot?

By building systems that prioritize structured memory, factuality grounding, and sovereign data control, we can finally stop asking AI to pretend to be us and start asking it to make our collective thinking more powerful than it has ever been.

Written by Matthew Bain, OmniIndex Head of Marketing.

All rights reserved © 2026 OmniIndex